Android Camera Video Recording Example

2023. 10. 17. 22:19


This is example code for recording video with the Android camera.

First written: 2020. 12. 27

When the app first starts, you must grant the permissions it requests.

Tapping RECORD starts recording from the camera. Tapping STOP saves the video, which you can then find in the gallery. The video is saved with the MP4 extension.

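The original code comes from the repository below. Its permission-handling code had been deprecated and I could not find a fix, so I converted the sample from a fragment to an activity. I also changed it so that the saved video can be found in the gallery.

https://github.com/googlearchive/android-Camera2Video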

Test environment

Android Studio 4.1.1

Android 10.0+ ( API 30 )

AndroidManifest.xml

        package="com.tistory.webnautes.camera2video">

        <uses-permission android:name="android.permission.CAMERA"/>
        <uses-permission android:name="android.permission.RECORD_AUDIO"/>
        <uses-permission android:name="android.permission.WRITE_EXTERNAL_STORAGE"/>

        <application
            android:requestLegacyExternalStorage="true"
            android:allowBackup="true"
            android:icon="@mipmap/ic_launcher"
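The three permissions declared above are also checked at runtime by `hasPermissionsGranted` in MainActivity. As a rough, framework-free illustration of that check, the sketch below collects the permissions that are still missing. The class name `PermissionCheckSketch`, the method `missingPermissions`, and the `isGranted` predicate are hypothetical; the predicate stands in for the real `ActivityCompat.checkSelfPermission` call so the logic can run on a plain JVM.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.function.Predicate;

/** Framework-free sketch of the "are all permissions granted?" check. */
final class PermissionCheckSketch {

    // The same three permissions the manifest declares.
    static final String[] VIDEO_PERMISSIONS = {
            "android.permission.CAMERA",
            "android.permission.RECORD_AUDIO",
            "android.permission.WRITE_EXTERNAL_STORAGE",
    };

    /**
     * Returns the permissions that are still missing.
     * isGranted is a hypothetical stand-in for a check such as
     * ActivityCompat.checkSelfPermission(...) == PERMISSION_GRANTED.
     */
    static List<String> missingPermissions(String[] required, Predicate<String> isGranted) {
        List<String> missing = new ArrayList<>();
        for (String permission : required) {
            if (!isGranted.test(permission)) {
                missing.add(permission);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Pretend the user has granted only the camera permission so far.
        Set<String> granted = Set.of("android.permission.CAMERA");
        List<String> missing = missingPermissions(VIDEO_PERMISSIONS, granted::contains);
        System.out.println("Still missing: " + missing);
    }
}
```

An empty result corresponds to the case where the activity can go straight to opening the camera; anything else means the permission request has to be shown first.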

MainActivity.java

    package com.tistory.webnautes.camera2video;

    import androidx.annotation.NonNull;
    import androidx.appcompat.app.AppCompatActivity;
    import androidx.core.app.ActivityCompat;

    import android.Manifest;
    import android.app.Activity;
    import android.app.AlertDialog;
    import android.app.Dialog;
    import android.app.DialogFragment;
    import android.content.Context;
    import android.content.DialogInterface;
    import android.content.Intent;
    import android.content.pm.PackageManager;
    import android.content.res.Configuration;
    import android.graphics.Matrix;
    import android.graphics.RectF;
    import android.graphics.SurfaceTexture;
    import android.hardware.camera2.CameraAccessException;
    import android.hardware.camera2.CameraCaptureSession;
    import android.hardware.camera2.CameraCharacteristics;
    import android.hardware.camera2.CameraDevice;
    import android.hardware.camera2.CameraManager;
    import android.hardware.camera2.CameraMetadata;
    import android.hardware.camera2.CaptureRequest;
    import android.hardware.camera2.params.StreamConfigurationMap;
    import android.media.MediaRecorder;
    import android.net.Uri;
    import android.os.Bundle;
    import android.os.Environment;
    import android.os.Handler;
    import android.os.HandlerThread;
    import android.util.Log;
    import android.util.Size;
    import android.util.SparseIntArray;
    import android.view.Surface;
    import android.view.TextureView;
    import android.view.View;
    import android.widget.Button;
    import android.widget.Toast;

    import java.io.File;
    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.Collections;
    import java.util.Comparator;
    import java.util.List;
    import java.util.concurrent.Semaphore;
    import java.util.concurrent.TimeUnit;

    public class MainActivity extends AppCompatActivity {

        private static final int SENSOR_ORIENTATION_DEFAULT_DEGREES = 90;
        private static final int SENSOR_ORIENTATION_INVERSE_DEGREES = 270;
        private static final SparseIntArray DEFAULT_ORIENTATIONS = new SparseIntArray();
        private static final SparseIntArray INVERSE_ORIENTATIONS = new SparseIntArray();

        private static final String TAG = "Camera2VideoFragment";
        private static final int REQUEST_VIDEO_PERMISSIONS = 1;
        private static final String FRAGMENT_DIALOG = "dialog";

        private static final String[] VIDEO_PERMISSIONS = {
                Manifest.permission.CAMERA,
                Manifest.permission.RECORD_AUDIO,
                Manifest.permission.WRITE_EXTERNAL_STORAGE,
        };

        static {
            DEFAULT_ORIENTATIONS.append(Surface.ROTATION_0, 90);
            DEFAULT_ORIENTATIONS.append(Surface.ROTATION_90, 0);
            DEFAULT_ORIENTATIONS.append(Surface.ROTATION_180, 270);
            DEFAULT_ORIENTATIONS.append(Surface.ROTATION_270, 180);
        }

        static {
            INVERSE_ORIENTATIONS.append(Surface.ROTATION_0, 270);
            INVERSE_ORIENTATIONS.append(Surface.ROTATION_90, 180);
            INVERSE_ORIENTATIONS.append(Surface.ROTATION_180, 90);
            INVERSE_ORIENTATIONS.append(Surface.ROTATION_270, 0);
        }

        /**
         * An {@link AutoFitTextureView} for camera preview.
         */
        private AutoFitTextureView mTextureView;

        /**
         * Button to record video
         */
        private Button mButtonVideo;

        /**
         * A reference to the opened {@link android.hardware.camera2.CameraDevice}.
         */
        private CameraDevice mCameraDevice;

        /**
         * A reference to the current {@link android.hardware.camera2.CameraCaptureSession} for
         * preview.
         */
        private CameraCaptureSession mPreviewSession;

        /**
         * {@link TextureView.SurfaceTextureListener} handles several lifecycle events on a
         * {@link TextureView}.
         */
        private TextureView.SurfaceTextureListener mSurfaceTextureListener
                = new TextureView.SurfaceTextureListener() {

            @Override
            public void onSurfaceTextureAvailable(SurfaceTexture surfaceTexture,
                                                  int width, int height) {
                openCamera(width, height);
            }

            @Override
            public void onSurfaceTextureSizeChanged(SurfaceTexture surfaceTexture,
                                                    int width, int height) {
                configureTransform(width, height);
            }

            @Override
            public boolean onSurfaceTextureDestroyed(SurfaceTexture surfaceTexture) {
                return true;
            }

            @Override
            public void onSurfaceTextureUpdated(SurfaceTexture surfaceTexture) {
            }

        };

        /**
         * The {@link android.util.Size} of camera preview.
         */
        private Size mPreviewSize;

        /**
         * The {@link android.util.Size} of video recording.
         */
        private Size mVideoSize;

        /**
         * MediaRecorder
         */
        private MediaRecorder mMediaRecorder;

        /**
         * Whether the app is recording video now
         */
        private boolean mIsRecordingVideo;

        /**
         * An additional thread for running tasks that shouldn't block the UI.
         */
        private HandlerThread mBackgroundThread;

        /**
         * A {@link Handler} for running tasks in the background.
         */
        private Handler mBackgroundHandler;

        /**
         * A {@link Semaphore} to prevent the app from exiting before closing the camera.
         */
        private Semaphore mCameraOpenCloseLock = new Semaphore(1);

        /**
         * {@link CameraDevice.StateCallback} is called when {@link CameraDevice} changes its status.
         */
        private CameraDevice.StateCallback mStateCallback = new CameraDevice.StateCallback() {

            @Override
            public void onOpened(@NonNull CameraDevice cameraDevice) {
                mCameraDevice = cameraDevice;
                startPreview();
                mCameraOpenCloseLock.release();
                if (null != mTextureView) {
                    configureTransform(mTextureView.getWidth(), mTextureView.getHeight());
                }
            }

            @Override
            public void onDisconnected(@NonNull CameraDevice cameraDevice) {
                mCameraOpenCloseLock.release();
                cameraDevice.close();
                mCameraDevice = null;
            }

            @Override
            public void onError(@NonNull CameraDevice cameraDevice, int error) {
                mCameraOpenCloseLock.release();
                cameraDevice.close();
                mCameraDevice = null;
                finish();
            }

        };

        private Integer mSensorOrientation;
        private String mNextVideoAbsolutePath;
        private File mNextVideo;
        private CaptureRequest.Builder mPreviewBuilder;

        /**
         * In this sample, we choose a video size with a 4:3 aspect ratio. Also, we don't use sizes
         * wider than 1080 pixels, since MediaRecorder cannot handle such a high-resolution video.
         *
         * @param choices The list of available sizes
         * @return The video size
         */
        private static Size chooseVideoSize(Size[] choices) {
            for (Size size : choices) {
                if (size.getWidth() == size.getHeight() * 4 / 3 && size.getWidth() <= 1080) {
                    return size;
                }
            }
            Log.e(TAG, "Couldn't find any suitable video size");
            return choices[choices.length - 1];
        }

        /**
         * Given {@code choices} of {@code Size}s supported by a camera, chooses the smallest one whose
         * width and height are at least as large as the respective requested values, and whose aspect
         * ratio matches with the specified value.
         *
         * @param choices     The list of sizes that the camera supports for the intended output class
         * @param width       The minimum desired width
         * @param height      The minimum desired height
         * @param aspectRatio The aspect ratio
         * @return The optimal {@code Size}, or an arbitrary one if none were big enough
         */
        private static Size chooseOptimalSize(Size[] choices, int width, int height,
                                              Size aspectRatio) {
            // Collect the supported resolutions that are at least as big as the preview Surface
            List<Size> bigEnough = new ArrayList<>();
            int w = aspectRatio.getWidth();
            int h = aspectRatio.getHeight();
            for (Size option : choices) {
                if (option.getHeight() == option.getWidth() * h / w &&
                        option.getWidth() >= width && option.getHeight() >= height) {
                    bigEnough.add(option);
                }
            }

            // Pick the smallest of those, assuming we found any
            if (bigEnough.size() > 0) {
                return Collections.min(bigEnough, new CompareSizesByArea());
            } else {
                Log.e(TAG, "Couldn't find any suitable preview size");
                return choices[0];
            }
        }

        @Override
        protected void onCreate(Bundle savedInstanceState) {
            super.onCreate(savedInstanceState);
            setContentView(R.layout.activity_main);

            mTextureView = (AutoFitTextureView) findViewById(R.id.texture);
            mButtonVideo = (Button) findViewById(R.id.video);

            // Toggle between recording and preview with a single button
            mButtonVideo.setOnClickListener(new Button.OnClickListener() {
                @Override
                public void onClick(View view) {
                    if (mIsRecordingVideo) {
                        stopRecordingVideo();
                    } else {
                        startRecordingVideo();
                    }
                }
            });

            findViewById(R.id.info).setOnClickListener(new Button.OnClickListener() {
                @Override
                public void onClick(View view) {
                    new AlertDialog.Builder(MainActivity.this)
                            .setMessage("This sample demonstrates how to record video using Camera2 API.")
                            .setPositiveButton(android.R.string.ok, null)
                            .show();
                }
            });
        }

        @Override
        public void onResume() {
            super.onResume();
            startBackgroundThread();
            if (mTextureView.isAvailable()) {
                openCamera(mTextureView.getWidth(), mTextureView.getHeight());
            } else {
                mTextureView.setSurfaceTextureListener(mSurfaceTextureListener);
            }
        }

        @Override
        public void onPause() {
            closeCamera();
            stopBackgroundThread();
            super.onPause();
        }

        /**
         * Starts a background thread and its {@link Handler}.
         */
        private void startBackgroundThread() {
            mBackgroundThread = new HandlerThread("CameraBackground");
            mBackgroundThread.start();
            mBackgroundHandler = new Handler(mBackgroundThread.getLooper());
        }

        /**
         * Stops the background thread and its {@link Handler}.
         */
        private void stopBackgroundThread() {
            mBackgroundThread.quitSafely();
            try {
                mBackgroundThread.join();
                mBackgroundThread = null;
                mBackgroundHandler = null;
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
        }

        /**
         * Gets whether you should show UI with rationale for requesting permissions.
         *
         * @param permissions The permissions your app wants to request.
         * @return Whether you can show permission rationale UI.
         */
        private boolean shouldShowRequestPermissionRationale(String[] permissions) {
            for (String permission : permissions) {
                if (ActivityCompat.shouldShowRequestPermissionRationale(this, permission)) {
                    return true;
                }
            }
            return false;
        }

        /**
         * Requests permissions needed for recording video.
         */
        private void requestVideoPermissions() {
            if (shouldShowRequestPermissionRationale(VIDEO_PERMISSIONS)) {
                new ConfirmationDialog().show(getFragmentManager(), FRAGMENT_DIALOG);
            } else {
                ActivityCompat.requestPermissions(
                        this, VIDEO_PERMISSIONS, REQUEST_VIDEO_PERMISSIONS);
            }
        }

        @Override
        public void onRequestPermissionsResult(int requestCode, @NonNull String[] permissions,
                                               @NonNull int[] grantResults) {
            Log.d(TAG, "onRequestPermissionsResult");
            if (requestCode == REQUEST_VIDEO_PERMISSIONS) {
                if (grantResults.length == VIDEO_PERMISSIONS.length) {
                    for (int result : grantResults) {
                        if (result != PackageManager.PERMISSION_GRANTED) {
                            ErrorDialog.newInstance("This sample needs permission for camera and audio recording.")
                                    .show(getFragmentManager(), FRAGMENT_DIALOG);
                            break;
                        }
                    }
                } else {
                    ErrorDialog.newInstance("This sample needs permission for camera and audio recording.")
                            .show(getFragmentManager(), FRAGMENT_DIALOG);
                }
            } else {
                super.onRequestPermissionsResult(requestCode, permissions, grantResults);
            }
        }

        private boolean hasPermissionsGranted(String[] permissions) {
            for (String permission : permissions) {
                if (ActivityCompat.checkSelfPermission(this, permission)
                        != PackageManager.PERMISSION_GRANTED) {
                    return false;
                }
            }
            return true;
        }

        /**
         * Tries to open a {@link CameraDevice}. The result is listened by `mStateCallback`.
         */
        @SuppressWarnings("MissingPermission")
        private void openCamera(int width, int height) {
            if (!hasPermissionsGranted(VIDEO_PERMISSIONS)) {
                requestVideoPermissions();
                return;
            }
            if (isFinishing()) {
                return;
            }
            CameraManager manager = (CameraManager) getSystemService(Context.CAMERA_SERVICE);
            try {
                Log.d(TAG, "tryAcquire");
                if (!mCameraOpenCloseLock.tryAcquire(2500, TimeUnit.MILLISECONDS)) {
                    throw new RuntimeException("Time out waiting to lock camera opening.");
                }
                String cameraId = manager.getCameraIdList()[0];

                // Choose the sizes for camera preview and video recording
                CameraCharacteristics characteristics = manager.getCameraCharacteristics(cameraId);
                StreamConfigurationMap map = characteristics
                        .get(CameraCharacteristics.SCALER_STREAM_CONFIGURATION_MAP);
                mSensorOrientation = characteristics.get(CameraCharacteristics.SENSOR_ORIENTATION);
                if (map == null) {
                    throw new RuntimeException("Cannot get available preview/video sizes");
                }
                mVideoSize = chooseVideoSize(map.getOutputSizes(MediaRecorder.class));
                mPreviewSize = chooseOptimalSize(map.getOutputSizes(SurfaceTexture.class),
                        width, height, mVideoSize);

                int orientation = getResources().getConfiguration().orientation;
                if (orientation == Configuration.ORIENTATION_LANDSCAPE) {
                    mTextureView.setAspectRatio(mPreviewSize.getWidth(), mPreviewSize.getHeight());
                } else {
                    mTextureView.setAspectRatio(mPreviewSize.getHeight(), mPreviewSize.getWidth());
                }
                configureTransform(width, height);
                mMediaRecorder = new MediaRecorder();
                manager.openCamera(cameraId, mStateCallback, null);
            } catch (CameraAccessException e) {
                Toast.makeText(MainActivity.this, "Cannot access the camera.", Toast.LENGTH_SHORT).show();
                finish();
            } catch (NullPointerException e) {
                // Currently an NPE is thrown when the Camera2API is used but not supported on the
                // device this code runs.
                ErrorDialog.newInstance("This device doesn't support Camera2 API.")
                        .show(getFragmentManager(), FRAGMENT_DIALOG);
            } catch (InterruptedException e) {
                throw new RuntimeException("Interrupted while trying to lock camera opening.");
            }
        }

        private void closeCamera() {
            try {
                mCameraOpenCloseLock.acquire();
                closePreviewSession();
                if (null != mCameraDevice) {
                    mCameraDevice.close();
                    mCameraDevice = null;
                }
                if (null != mMediaRecorder) {
                    mMediaRecorder.release();
                    mMediaRecorder = null;
                }
            } catch (InterruptedException e) {
                throw new RuntimeException("Interrupted while trying to lock camera closing.");
            } finally {
                mCameraOpenCloseLock.release();
            }
        }

        /**
         * Start the camera preview.
         */
        private void startPreview() {
            if (null == mCameraDevice || !mTextureView.isAvailable() || null == mPreviewSize) {
                return;
            }
            try {
                closePreviewSession();
                SurfaceTexture texture = mTextureView.getSurfaceTexture();
                assert texture != null;
                texture.setDefaultBufferSize(mPreviewSize.getWidth(), mPreviewSize.getHeight());
                mPreviewBuilder = mCameraDevice.createCaptureRequest(CameraDevice.TEMPLATE_PREVIEW);

                Surface previewSurface = new Surface(texture);
                mPreviewBuilder.addTarget(previewSurface);

                mCameraDevice.createCaptureSession(Collections.singletonList(previewSurface),
                        new CameraCaptureSession.StateCallback() {

                            @Override
                            public void onConfigured(@NonNull CameraCaptureSession session) {
                                mPreviewSession = session;
                                updatePreview();
                            }

                            @Override
                            public void onConfigureFailed(@NonNull CameraCaptureSession session) {
                                Toast.makeText(MainActivity.this, "Failed", Toast.LENGTH_SHORT).show();
                            }
                        }, mBackgroundHandler);
            } catch (CameraAccessException e) {
                e.printStackTrace();
            }
        }

        /**
         * Update the camera preview. {@link #startPreview()} needs to be called in advance.
         */
        private void updatePreview() {
            if (null == mCameraDevice) {
                return;
            }
            try {
                setUpCaptureRequestBuilder(mPreviewBuilder);
                // Repeating requests are delivered on the existing background handler
                mPreviewSession.setRepeatingRequest(mPreviewBuilder.build(), null, mBackgroundHandler);
            } catch (CameraAccessException e) {
                e.printStackTrace();
            }
        }

        private void setUpCaptureRequestBuilder(CaptureRequest.Builder builder) {
            builder.set(CaptureRequest.CONTROL_MODE, CameraMetadata.CONTROL_MODE_AUTO);
        }

        /**
         * Configures the necessary {@link android.graphics.Matrix} transformation to `mTextureView`.
         * This method should not be called until the camera preview size is determined in
         * openCamera, or until the size of `mTextureView` is fixed.
         *
         * @param viewWidth  The width of `mTextureView`
         * @param viewHeight The height of `mTextureView`
         */
        private void configureTransform(int viewWidth, int viewHeight) {
            if (null == mTextureView || null == mPreviewSize) {
                return;
            }
            int rotation = getWindowManager().getDefaultDisplay().getRotation();
            Matrix matrix = new Matrix();
            RectF viewRect = new RectF(0, 0, viewWidth, viewHeight);
            RectF bufferRect = new RectF(0, 0, mPreviewSize.getHeight(), mPreviewSize.getWidth());
            float centerX = viewRect.centerX();
            float centerY = viewRect.centerY();
            if (Surface.ROTATION_90 == rotation || Surface.ROTATION_270 == rotation) {
                bufferRect.offset(centerX - bufferRect.centerX(), centerY - bufferRect.centerY());
                matrix.setRectToRect(viewRect, bufferRect, Matrix.ScaleToFit.FILL);
                float scale = Math.max(
                        (float) viewHeight / mPreviewSize.getHeight(),
                        (float) viewWidth / mPreviewSize.getWidth());
                matrix.postScale(scale, scale, centerX, centerY);
                matrix.postRotate(90 * (rotation - 2), centerX, centerY);
            }
            mTextureView.setTransform(matrix);
        }

        private void setUpMediaRecorder() throws IOException {
            mMediaRecorder.setAudioSource(MediaRecorder.AudioSource.MIC);
            mMediaRecorder.setVideoSource(MediaRecorder.VideoSource.SURFACE);
            mMediaRecorder.setOutputFormat(MediaRecorder.OutputFormat.MPEG_4);

            // Save into <external storage>/camtest, named after the current time in milliseconds
            File path = new File(Environment.getExternalStorageDirectory().getAbsolutePath() + "/camtest");
            if (!path.exists()) {
                path.mkdirs();
            }
            String fileName = String.format("%d.mp4", System.currentTimeMillis());
            mNextVideo = new File(path, fileName);
            mNextVideoAbsolutePath = mNextVideo.getAbsolutePath();

            mMediaRecorder.setOutputFile(mNextVideoAbsolutePath);
            mMediaRecorder.setVideoEncodingBitRate(10000000);
            mMediaRecorder.setVideoFrameRate(30);
            mMediaRecorder.setVideoSize(mVideoSize.getWidth(), mVideoSize.getHeight());
            mMediaRecorder.setVideoEncoder(MediaRecorder.VideoEncoder.H264);
            mMediaRecorder.setAudioEncoder(MediaRecorder.AudioEncoder.AAC);
            int rotation = getWindowManager().getDefaultDisplay().getRotation();
            switch (mSensorOrientation) {
                case SENSOR_ORIENTATION_DEFAULT_DEGREES:
                    mMediaRecorder.setOrientationHint(DEFAULT_ORIENTATIONS.get(rotation));
                    break;
                case SENSOR_ORIENTATION_INVERSE_DEGREES:
                    mMediaRecorder.setOrientationHint(INVERSE_ORIENTATIONS.get(rotation));
                    break;
            }
            mMediaRecorder.prepare();
        }

        private void startRecordingVideo() {
            if (null == mCameraDevice || !mTextureView.isAvailable() || null == mPreviewSize) {
                return;
            }
            try {
                closePreviewSession();
                setUpMediaRecorder();
                SurfaceTexture texture = mTextureView.getSurfaceTexture();
                assert texture != null;
                texture.setDefaultBufferSize(mPreviewSize.getWidth(), mPreviewSize.getHeight());
                mPreviewBuilder = mCameraDevice.createCaptureRequest(CameraDevice.TEMPLATE_RECORD);
                List<Surface> surfaces = new ArrayList<>();

                // Set up Surface for the camera preview
                Surface previewSurface = new Surface(texture);
                surfaces.add(previewSurface);
                mPreviewBuilder.addTarget(previewSurface);

                // Set up Surface for the MediaRecorder
                Surface recorderSurface = mMediaRecorder.getSurface();
                surfaces.add(recorderSurface);
                mPreviewBuilder.addTarget(recorderSurface);

                // Start a capture session
                // Once the session starts, we can update the UI and start recording
                mCameraDevice.createCaptureSession(surfaces, new CameraCaptureSession.StateCallback() {

                    @Override
                    public void onConfigured(@NonNull CameraCaptureSession cameraCaptureSession) {
                        mPreviewSession = cameraCaptureSession;
                        updatePreview();
                        runOnUiThread(new Runnable() {
                            @Override
                            public void run() {
                                // UI
                                mButtonVideo.setText("Stop");
                                mIsRecordingVideo = true;

                                // Start recording
                                mMediaRecorder.start();
                            }
                        });
                    }

                    @Override
                    public void onConfigureFailed(@NonNull CameraCaptureSession cameraCaptureSession) {
                        Toast.makeText(MainActivity.this, "Failed", Toast.LENGTH_SHORT).show();
                    }
                }, mBackgroundHandler);
            } catch (CameraAccessException | IOException e) {
                e.printStackTrace();
            }
        }

        private void closePreviewSession() {
            if (mPreviewSession != null) {
                mPreviewSession.close();
                mPreviewSession = null;
            }
        }

        private void stopRecordingVideo() {
            // UI
            mIsRecordingVideo = false;
            mButtonVideo.setText("Record");

            // Stop recording
            mMediaRecorder.stop();
            mMediaRecorder.reset();

            // Ask the media scanner to index the new file so it appears in the gallery
            Intent mediaScanIntent = new Intent(Intent.ACTION_MEDIA_SCANNER_SCAN_FILE);
            mediaScanIntent.setData(Uri.fromFile(mNextVideo));
            sendBroadcast(mediaScanIntent);

            Toast.makeText(MainActivity.this, "Video saved: " + mNextVideoAbsolutePath,
                    Toast.LENGTH_SHORT).show();
            Log.d(TAG, "Video saved: " + mNextVideoAbsolutePath);

            mNextVideoAbsolutePath = null;
            startPreview();
        }

        /**
         * Compares two {@code Size}s based on their areas.
         */
        static class CompareSizesByArea implements Comparator<Size> {

            @Override
            public int compare(Size lhs, Size rhs) {
                // We cast here to ensure the multiplications won't overflow
                return Long.signum((long) lhs.getWidth() * lhs.getHeight() -
                        (long) rhs.getWidth() * rhs.getHeight());
            }

        }

        public static class ErrorDialog extends DialogFragment {

            private static final String ARG_MESSAGE = "message";

            public static ErrorDialog newInstance(String message) {
                ErrorDialog dialog = new ErrorDialog();
                Bundle args = new Bundle();
                args.putString(ARG_MESSAGE, message);
                dialog.setArguments(args);
                return dialog;
            }

            @Override
            public Dialog onCreateDialog(Bundle savedInstanceState) {
                final Activity activity = getActivity();
                return new AlertDialog.Builder(activity)
                        .setMessage(getArguments().getString(ARG_MESSAGE))
                        .setPositiveButton(android.R.string.ok, new DialogInterface.OnClickListener() {
                            @Override
                            public void onClick(DialogInterface dialogInterface, int i) {
                                activity.finish();
                            }
                        })
                        .create();
            }

        }

        public static class ConfirmationDialog extends DialogFragment {

            @Override
            public Dialog onCreateDialog(Bundle savedInstanceState) {
                // The dialog is shown directly from the activity, so there is no parent
                // fragment; use the hosting activity instead.
                final Activity activity = getActivity();
                return new AlertDialog.Builder(activity)
                        .setMessage("This sample needs permission for camera and audio recording.")
                        .setPositiveButton(android.R.string.ok, new DialogInterface.OnClickListener() {
                            @Override
                            public void onClick(DialogInterface dialog, int which) {
                                ActivityCompat.requestPermissions(
                                        activity, VIDEO_PERMISSIONS, REQUEST_VIDEO_PERMISSIONS);
                            }
                        })
                        .setNegativeButton(android.R.string.cancel, new DialogInterface.OnClickListener() {
                            @Override
                            public void onClick(DialogInterface dialog, int which) {
                                activity.finish();
                            }
                        })
                        .create();
            }

        }

    }
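Two small calculations in MainActivity are easy to miss in the full listing: how `chooseVideoSize` picks a recording size, and how the orientation hint is looked up from the sensor orientation and the display rotation. The sketch below restates both without any Android classes so they can be tried on a plain JVM. It is only an illustration: the class `RecorderMathSketch`, its nested `Size` class (a stand-in for `android.util.Size`), the method `orientationHint`, and the use of `Map` instead of `SparseIntArray` are all assumptions made for this example. Returning 0 for other sensor orientations is also an assumption, mirroring the fact that the activity sets no hint in that case.

```java
import java.util.Map;

/** Framework-free sketch of two small calculations from MainActivity. */
final class RecorderMathSketch {

    /** Hypothetical stand-in for android.util.Size. */
    static final class Size {
        final int width;
        final int height;

        Size(int width, int height) {
            this.width = width;
            this.height = height;
        }

        @Override
        public String toString() {
            return width + "x" + height;
        }
    }

    /** First 4:3 size whose width is at most 1080, else the last entry of the list. */
    static Size chooseVideoSize(Size[] choices) {
        for (Size size : choices) {
            if (size.width == size.height * 4 / 3 && size.width <= 1080) {
                return size;
            }
        }
        return choices[choices.length - 1];
    }

    // Display rotation in quarter turns (0..3, like Surface.ROTATION_*) -> hint in degrees.
    static final Map<Integer, Integer> DEFAULT_ORIENTATIONS = Map.of(0, 90, 1, 0, 2, 270, 3, 180);
    static final Map<Integer, Integer> INVERSE_ORIENTATIONS = Map.of(0, 270, 1, 180, 2, 90, 3, 0);

    /**
     * Orientation hint for a sensor mounted at 90 or 270 degrees.
     * Returns 0 for any other sensor orientation (an assumption of this sketch).
     * displayRotation must be in the range 0..3.
     */
    static int orientationHint(int sensorOrientation, int displayRotation) {
        switch (sensorOrientation) {
            case 90:
                return DEFAULT_ORIENTATIONS.get(displayRotation);
            case 270:
                return INVERSE_ORIENTATIONS.get(displayRotation);
            default:
                return 0;
        }
    }

    public static void main(String[] args) {
        Size[] choices = {
                new Size(1920, 1080), new Size(1440, 1080),
                new Size(1280, 720), new Size(640, 480),
        };
        System.out.println("Video size: " + chooseVideoSize(choices));
        System.out.println("Hint: " + orientationHint(90, 0));
    }
}
```

Note that the width limit applies to 4:3 sizes only, so a 1440x1080 entry is skipped even though its aspect ratio matches; when no entry qualifies, the last element of the list is used as a fallback.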

AutoFitTextureView.java

    package com.tistory.webnautes.camera2video;

    import android.content.Context;
    import android.util.AttributeSet;
    import android.view.TextureView;

    /**
     * A {@link TextureView} that can be adjusted to a specified aspect ratio.
     */
    public class AutoFitTextureView extends TextureView {

        private int mRatioWidth = 0;
        private int mRatioHeight = 0;

        public AutoFitTextureView(Context context) {
            this(context, null);
        }

        public AutoFitTextureView(Context context, AttributeSet attrs) {
            this(context, attrs, 0);
        }

        public AutoFitTextureView(Context context, AttributeSet attrs, int defStyle) {
            super(context, attrs, defStyle);
        }

        /**
         * Sets the aspect ratio for this view. The size of the view will be measured based on the ratio
         * calculated from the parameters. Note that the actual sizes of parameters don't matter, that
         * is, calling setAspectRatio(2, 3) and setAspectRatio(4, 6) make the same result.
         *
         * @param width  Relative horizontal size
         * @param height Relative vertical size
         */
        public void setAspectRatio(int width, int height) {
            if (width < 0 || height < 0) {
                throw new IllegalArgumentException("Size cannot be negative.");
            }
            mRatioWidth = width;
            mRatioHeight = height;
            requestLayout();
        }

        @Override
        protected void onMeasure(int widthMeasureSpec, int heightMeasureSpec) {
            super.onMeasure(widthMeasureSpec, heightMeasureSpec);
            int width = MeasureSpec.getSize(widthMeasureSpec);
            int height = MeasureSpec.getSize(heightMeasureSpec);
            if (0 == mRatioWidth || 0 == mRatioHeight) {
                setMeasuredDimension(width, height);
            } else {
                if (width < height * mRatioWidth / mRatioHeight) {
                    setMeasuredDimension(width, width * mRatioHeight / mRatioWidth);
                } else {
                    setMeasuredDimension(height * mRatioWidth / mRatioHeight, height);
                }
            }
        }

    }
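The arithmetic in `onMeasure` is what keeps the preview from being stretched: the view shrinks one side so that the measured size matches the requested ratio. As a small illustration, the sketch below repeats that arithmetic as a plain function. The class `AspectFitSketch` and the method `fit` are hypothetical names introduced for this example, and the sample numbers in `main` are arbitrary.

```java
/** Framework-free sketch of the arithmetic in AutoFitTextureView.onMeasure. */
final class AspectFitSketch {

    /**
     * Returns {measuredWidth, measuredHeight} for the given available size and ratio.
     * A ratio of 0 means no ratio has been set yet, so the available size is used unchanged.
     */
    static int[] fit(int width, int height, int ratioWidth, int ratioHeight) {
        if (ratioWidth == 0 || ratioHeight == 0) {
            return new int[] {width, height};
        }
        if (width < height * ratioWidth / ratioHeight) {
            // Width is the limiting side: keep it and derive the height.
            return new int[] {width, width * ratioHeight / ratioWidth};
        }
        // Height is the limiting side: keep it and derive the width.
        return new int[] {height * ratioWidth / ratioHeight, height};
    }

    public static void main(String[] args) {
        // A portrait screen showing a 3:4 preview.
        int[] portrait = fit(1080, 1920, 3, 4);
        System.out.println(portrait[0] + " x " + portrait[1]);
    }
}
```

Because the calculation uses integer division, the result can be off by a pixel for ratios that do not divide evenly, which is the same behavior as the view itself.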

activity_main.xml

    <?xml version="1.0" encoding="utf-8"?>
    <RelativeLayout xmlns:android="http://schemas.android.com/apk/res/android"
        android:layout_width="match_parent"
        android:layout_height="match_parent">

        <com.tistory.webnautes.camera2video.AutoFitTextureView
            android:id="@+id/texture"
            android:layout_width="wrap_content"
            android:layout_height="wrap_content"
            android:layout_alignParentStart="true"
            android:layout_alignParentTop="true" />

        <FrameLayout
            android:layout_width="match_parent"
            android:layout_height="wrap_content"
            android:layout_alignParentBottom="true"
            android:layout_alignParentStart="true"
            android:layout_below="@id/texture"
            android:background="#4285f4">

            <Button
                android:id="@+id/video"
                android:layout_width="wrap_content"
                android:layout_height="wrap_content"
                android:layout_gravity="center"
                android:text="Record" />

            <ImageButton
                android:id="@+id/info"
                android:contentDescription="Info"
                style="@android:style/Widget.Material.Light.Button.Borderless"
                android:layout_width="wrap_content"
                android:layout_height="wrap_content"
                android:layout_gravity="center_vertical|right"
                android:padding="20dp"
                android:src="@android:drawable/ic_dialog_info" />

        </FrameLayout>

    </RelativeLayout>

반응형

'Android > 개념 및 예제' 카테고리의 다른 글

| 안드로이드 카메라 예제 ( 프리뷰 및 사진찍기, android.hardware.camera2 ) (0) | 2023.10.17 |

|---|---|

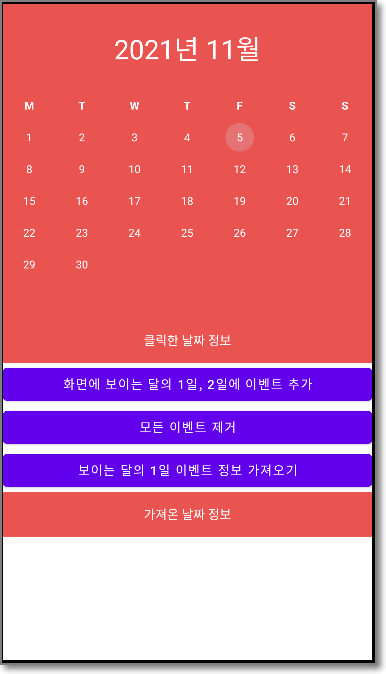

| 이벤트 추가와 날짜 선택 가능한 안드로이드 달력 예제 ( CompactCalendarView 라이브러리) (0) | 2023.10.14 |

| Android와 PC JAVA 프로그램 간 블루투스 통신 예제 (0) | 2023.10.14 |

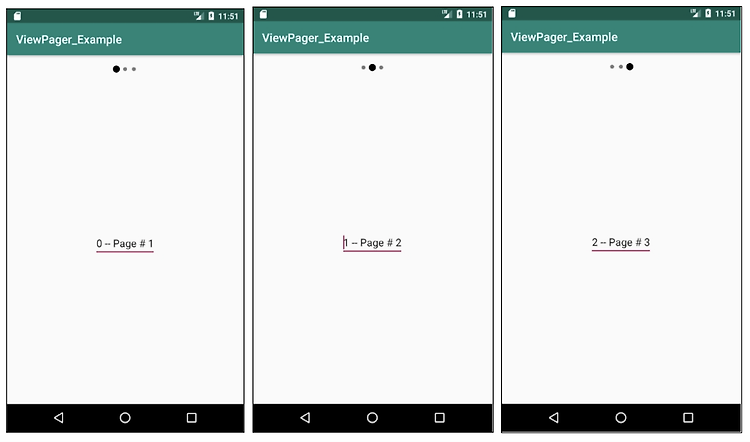

| Android ViewPager 예제 - 좌우로 스와이프로 화면 전환 (0) | 2023.10.14 |

| Android용 HTML 파서(Parser) jsoup 사용해보기 (11) | 2021.01.26 |